Something fundamental has shifted in American power markets, and it happened faster than almost anyone predicted. Since ChatGPT launched in November 2022, annual spending on data centers in the US has roughly tripled, from $13.8 billion to $41.2 billion. That is an increase of about 200% in just three years, at a time when virtually all other construction activity in the country has slowed.

The scale of what's coming is staggering. Rystad Energy estimates the US has over 100 GW of data center demand coming online between 2024 and 2035. Global data centers already use roughly as much electricity each year as all of France, and under the most likely growth scenario, that figure doubles to around 945 TWh by 2030. Under an accelerated AI adoption scenario, it could hit 1,700 TWh by 2035, placing the sector on par with the energy footprint of India.
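The projections above are quoted in terawatt-hours per year, while grid demand is usually discussed in gigawatts. The sketch below converts one to the other. It is plain arithmetic under two simplifying assumptions that are not from the source: an 8,760-hour year and perfectly flat consumption. The function name is illustrative only.

```python
HOURS_PER_YEAR = 8760  # assumption: non-leap year


def average_gw(annual_twh: float) -> float:
    """Average continuous power (GW) implied by an annual energy total (TWh)."""
    annual_gwh = annual_twh * 1000  # 1 TWh = 1,000 GWh
    return annual_gwh / HOURS_PER_YEAR


for label, twh in [("2030 base case", 945), ("2035 accelerated case", 1700)]:
    print(f"{label}: {twh} TWh/yr is about {average_gw(twh):.0f} GW of average load")
```

Under these assumptions, 945 TWh per year corresponds to roughly 108 GW of continuous draw, and 1,700 TWh to roughly 194 GW.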

Grid planners are scrambling to keep up. Of the 166 GW in forecast peak load growth across the US through the end of the decade, roughly 90 GW, or about 54%, is tied to data centers alone. Utilities are receiving interconnection requests that in some cases more than double their existing peak loads. And the supply side isn't keeping pace: over 83 GW of dispatchable baseload generation is slated for retirement over the next decade, tightening an already stressed equation. The digital economy is running headlong into the limits of the physical grid.

How Hyperscalers Are Chasing Power

For the hyperscalers building this infrastructure, power isn't just an operating cost — it's the binding constraint on how fast they can grow. And the money they're willing to spend to solve it reflects that.

The Big Five hyperscalers (Amazon, Microsoft, Google, Meta, and Oracle) are projected to spend over $600 billion on infrastructure in 2026 alone, a 36% increase from 2025. Roughly 75% of that targets AI infrastructure directly. Capital intensity has reached over 50% of revenue for some companies, a ratio that historically looks more like that of a regulated utility than a technology business. Goldman Sachs projects the Big Five will spend $1.15 trillion in total CapEx between 2025 and 2027. Maintaining this pace risks profitability if AI adoption lags, but pulling back means losing the race, so the spending continues.

The scale of individual projects makes the aggregate numbers concrete. When Mark Zuckerberg visited the White House, he showed Trump a proposed data center footprint overlaid on a map of Manhattan — miles long, miles wide, covering most of the island. Meta's Hyperion campus in rural Louisiana spans 2,250 acres of former farmland, carries a reported $50 billion price tag, and is designed to scale to 5 GW over several years.

Prometheus, Meta's first gigawatt-scale cluster, comes online in 2026 and will be the largest data center in the world when complete. Meta's capital expenditure guidance for 2025 sits at $72 billion — up 70% year-over-year — with Zuckerberg warning that 2026 spending will be "notably larger." In response, the Trump administration authorized AI companies to operate as their own private utilities, effectively clearing the way for hyperscalers to build and manage on-site generation independent of the traditional utility framework.

At these capital levels, the cost of power becomes a secondary concern. What matters is availability, reliability, and speed of access. That calculus is reshaping how these companies procure energy.

PPAs: The Default Data Center Power Procurement Tool, and Its Limits

The Power Purchase Agreement became the dominant procurement mechanism for hyperscalers over the past decade, and the numbers reflect that clearly. In 2024, a record 68 GW of corporate clean energy PPAs were announced globally. Data centers drove nearly 60% of US corporate PPA volume that year, up from around 50% the prior year. Microsoft alone has contracted 34.7 GW of clean power — making it the largest corporate clean energy buyer in the world.

Indeed, technology companies have been the predominant PPA buyers for a decade or more, driving sustained growth in renewable energy. A PPA's appeal is straightforward: it locks in a long-term price for electricity, typically for 15–20 years, giving hyperscalers cost certainty plus the renewable energy certificates needed to support net-zero commitments.

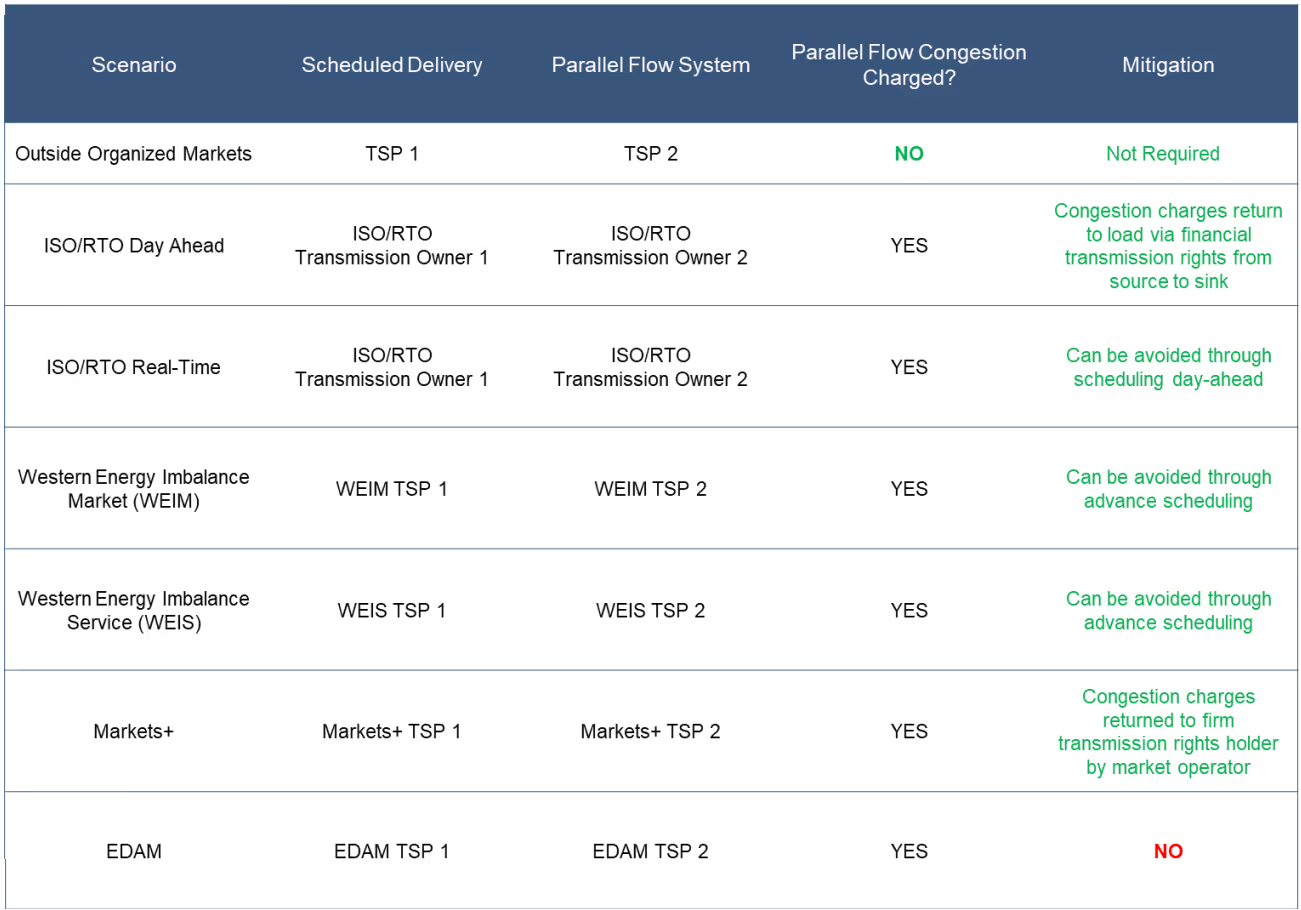

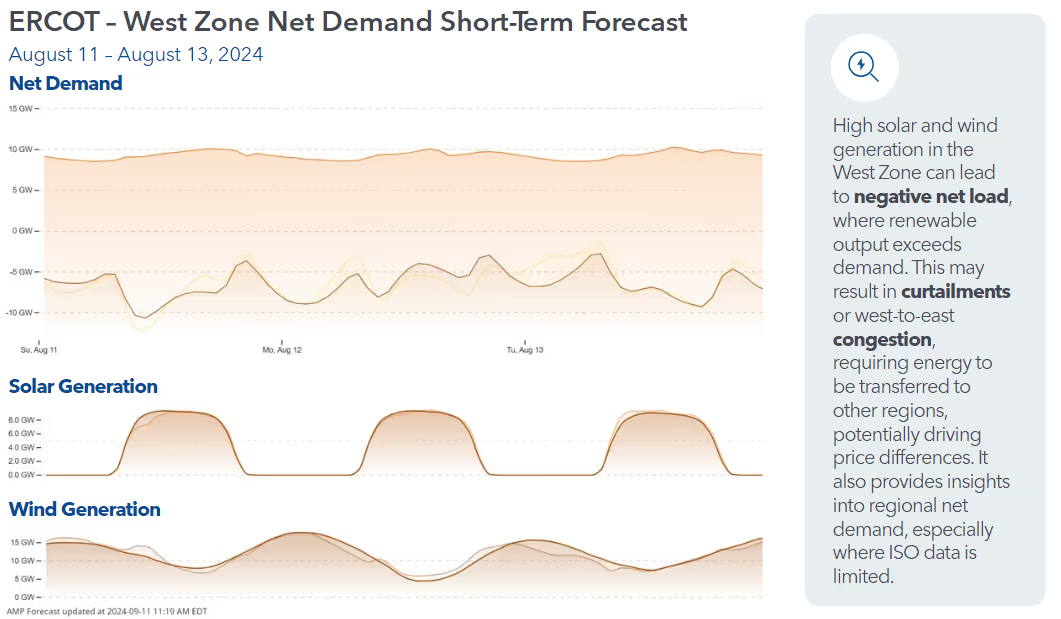

But PPAs have a structural flaw. The majority are tied to solar and wind assets, which are intermittent by nature. A PPA locks in a price — not a delivery guarantee. When a contracted solar project curtails because the grid is congested, the data center still owes the payment but receives no electrons. That mismatch is becoming harder to ignore as curtailment accelerates across US grids. In 2024, four of the seven US ISOs set annual records for curtailed renewable energy. In ERCOT alone, over 8 TWh of wind and solar was curtailed that year. Even PJM, historically a low-curtailment region, saw curtailments jump roughly sixfold.

This is partly the hyperscalers' own doing. The same data center load growth pushing PPA signings to record levels is accelerating grid congestion — increasing the curtailment risk on the very contracts being signed. Transmission is the silent bottleneck: generation exists, but it can't always reach where it's needed.
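The price-versus-delivery mismatch described above can be put in numbers. The sketch below is a deliberately simplified model, not a description of any real contract: it assumes the buyer pays the fixed price on the full contracted volume even when part of it is curtailed, as the text describes. The price, volume, and curtailment fractions are hypothetical, and actual PPA terms vary widely.

```python
def effective_cost_per_delivered_mwh(
    price_per_mwh: float, contracted_mwh: float, curtailed_fraction: float
) -> float:
    """Cost per MWh actually delivered when payment is owed on the full contracted volume."""
    if not 0 <= curtailed_fraction < 1:
        raise ValueError("curtailed_fraction must be in [0, 1)")
    payment = price_per_mwh * contracted_mwh                 # owed regardless of curtailment (assumption)
    delivered_mwh = contracted_mwh * (1 - curtailed_fraction)
    return payment / delivered_mwh


# Hypothetical contract: $50/MWh on 100,000 MWh per year.
for curtailed in (0.0, 0.05, 0.20):
    cost = effective_cost_per_delivered_mwh(50.0, 100_000, curtailed)
    print(f"{curtailed:.0%} curtailed: ${cost:.2f} per delivered MWh")
```

In this toy model, 20% curtailment lifts the effective cost from $50.00 to $62.50 per delivered MWh, and the buyer still has to source the missing energy elsewhere.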

The industry is adapting. Hybrid contracting — solar paired with battery storage — is growing fast, with 2 GW contracted in North America in 2024. And 56% of developers are now exploring co-located or on-site generation as a core strategy, not a fallback. But renewable energy and batteries are not enough to fill the need.

The Nuclear Option: When Price Stops Being the Barrier to Power Procurement

No moment better illustrates how hyperscalers think about power than the resurrection of Three Mile Island, the infamous nuclear power plant in Pennsylvania. Unit 1 at Three Mile Island operated safely for decades before being shut down in 2019. In the end, it wasn't a safety issue — it was an economic one. Cheap natural gas and subsidized renewables had made the plant uncompetitive, and Pennsylvania lawmakers declined to intervene. The reactor went dark.

Five years later, Constellation Energy announced it would restart the plant under a 20-year Power Purchase Agreement with Microsoft. Microsoft will purchase the plant's entire generating output — 835 MW — enough to power roughly 800,000 homes. Restarting requires significant investment in the turbine, generator, main power transformer, and cooling and control systems. MIT nuclear engineering professor Jacopo Buongiorno estimated the restart cost will run into the tens of billions. Microsoft will likely pay above-market rates for the power.

Three Mile Island is not a one-off. The deal between Amazon Web Services (AWS) and Talen Energy, owner of the Susquehanna nuclear plant, further illustrates both the appetite for nuclear power and the regulatory complexity that comes with it. The companies signed a new 1,920 MW PPA running through 2042, and Talen expects the contract to generate roughly $18 billion in revenue over its life. Meanwhile, Meta has issued an RFP for 1 to 4 GW of new nuclear capacity, and Google has signed agreements with small modular reactor developers.

The regulatory picture is still evolving. PJM's market monitor has warned that diverting existing nuclear output to serve data centers directly could have significant effects on wholesale energy and capacity markets — a concern that will only grow as more deals of this scale get structured.

But nuclear's appeal is simple: it produces carbon-free power around the clock, perfectly matching the load profile of a server farm in a way that wind and solar cannot. The question is no longer whether hyperscalers will pursue nuclear — it's whether the regulatory framework can keep up with the pace at which they're doing it.

Data Center Load Profiles: Why They’re Different

Understanding why hyperscalers are willing to pay a premium for firm power requires understanding what their load actually looks like. Data centers are high load factor, near-baseload consumers. Dominion Virginia reported an 82% load factor for large data centers, and Duke Energy plans for new large loads at 80%. Unlike most industrial or commercial loads, there's no meaningful morning ramp, no evening peak, no weekday-weekend variation. The servers run continuously at roughly the same level around the clock.
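Load factor is average demand divided by peak demand over a period. As a minimal illustration, the sketch below computes it for two made-up 24-hour profiles: one nearly flat, as the text describes for data centers, and one with a pronounced daytime peak. Both profiles are hypothetical and are not utility data.

```python
def load_factor(hourly_mw: list[float]) -> float:
    """Average demand divided by peak demand over the period."""
    if not hourly_mw:
        raise ValueError("need at least one hourly reading")
    peak = max(hourly_mw)
    if peak <= 0:
        raise ValueError("peak demand must be positive")
    average = sum(hourly_mw) / len(hourly_mw)
    return average / peak


# Hypothetical profiles in MW, one value per hour.
data_center = [80] * 23 + [100]        # nearly flat, with one brief peak
office_building = [30] * 16 + [100] * 8  # low overnight, high during the workday

print(f"data center load factor:     {load_factor(data_center):.2f}")
print(f"office building load factor: {load_factor(office_building):.2f}")
```

The flat profile comes out at about 0.81, in the range the utilities above report, while the peaky profile comes out at about 0.53.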

This is particularly true for AI training workloads, which have an especially flat consumption pattern. AI inference, which means running models for end users, introduces more variation, but the overall shape stays close to baseload. Data centers typically maintain utilization rates around 80%, leaving roughly 20% headroom into which load can, in theory, be shifted.

That flexibility isn't wasted. Research modeling power systems in Texas and the Mid-Atlantic and Western regions found that flexible data centers reduce system costs by shifting load from peak to off-peak hours, flattening net demand and supporting renewable integration. Google's carbon-aware scheduler — which routes workloads toward hours when clean energy availability is highest — is the highest-profile proof of concept.
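The peak-to-off-peak shifting described above can be illustrated with a toy heuristic. The sketch below is not the method used in the cited research or in Google's scheduler. It moves a fixed block of flexible load out of the most expensive hours and into the cheapest ones, limited by the facility's spare capacity. The hourly prices, the 10 MW flexible block, and the function names are all assumptions made for illustration.

```python
def shift_flexible_load(load_mw, price, flexible_mw, capacity_mw, hours_to_shift):
    """Move up to `flexible_mw` from each of the priciest hours into the cheapest hours."""
    n = len(load_mw)
    if n != len(price):
        raise ValueError("load and price series must have the same length")
    if not 0 < hours_to_shift <= n // 2:
        raise ValueError("hours_to_shift must be between 1 and half the horizon")
    by_price = sorted(range(n), key=lambda h: price[h])
    cheapest, priciest = by_price[:hours_to_shift], by_price[-hours_to_shift:]
    shifted = list(load_mw)
    for src, dst in zip(priciest, cheapest):
        headroom = capacity_mw - shifted[dst]  # spare capacity in the cheap hour
        amount = max(0.0, min(flexible_mw, shifted[src], headroom))
        shifted[src] -= amount
        shifted[dst] += amount                 # total energy is conserved
    return shifted


def energy_cost(load_mw, price):
    """Total cost of serving the load at the given hourly prices."""
    return sum(mw * p for mw, p in zip(load_mw, price))


# Hypothetical day: flat 80 MW load in a 100 MW facility, with an evening price peak.
prices = [30] * 6 + [45] * 10 + [90] * 4 + [50] * 4  # $/MWh, one value per hour
load = [80.0] * 24
shifted = shift_flexible_load(load, prices, flexible_mw=10, capacity_mw=100, hours_to_shift=4)

print(f"cost before: ${energy_cost(load, prices):,.0f}")
print(f"cost after:  ${energy_cost(shifted, prices):,.0f}")
```

Total energy consumed is unchanged; only its timing moves. Price here stands in for system stress, so a lower bill in this toy example is a rough proxy for the flatter net demand the research describes.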

But the core load doesn't flex much. A facility running at an 80% load factor, 24 hours a day, is a natural day-ahead buyer: it knows its consumption, can commit to it the night before, and has limited appetite for real-time market exposure. Downtime is expensive. Intermittency is not acceptable. That operational reality is what drives the procurement logic: when your load never stops, you need power that never stops either.

Why Data Centers Are Participating in Energy Markets

For now, hyperscalers remain primarily long-term contract buyers — their flat load profiles and 15–20 year PPA commitments leave little incentive to actively manage exposure in day-ahead or real-time markets. When you've already locked in power a decade out, short-term price signals matter less. But that may be starting to change.

In 2025, Meta formed a wholly-owned subsidiary called Atem Energy and filed with FERC for authorization to sell energy, capacity, and ancillary services at wholesale — effectively seeking to become a power marketer that can sell excess power it doesn't consume back into competitive markets. The move is a direct response to the scale of Meta's energy commitments: its Louisiana Hyperion campus alone will draw on 2.26 GW of combined-cycle gas generation built by Entergy, and the company has layered on top of that a 20-year, 1.1 GW nuclear PPA with Constellation Energy for its Clinton, Illinois plant, plus hundreds of megawatts in renewable agreements.

At that scale, active wholesale market participation starts to make financial sense — both to hedge renewable contracts and to monetize surplus generation. Whether other hyperscalers follow Meta's lead into wholesale markets remains to be seen. The structural argument against it is the same as ever: a load that runs flat around the clock, already hedged through long-term bilateral contracts, doesn't need the short-term flexibility that wholesale day-ahead and real-time (DA/RT) market participation provides. But as these companies' energy footprints grow toward multiple gigawatts per campus, the economics of active market participation will only become more compelling.

While the regulatory framework is still evolving, the underlying pressures remain constant: AI-driven data center load keeps growing, just as renewable energy curtailment keeps rising. The grid was never designed to absorb demand at this speed or concentration. The natural next step — already signaled by Meta's Atem Energy filing — is deeper market participation: moving beyond bilateral contracts toward actively buying, selling, and hedging power in wholesale markets. Load-serving entities and other power traders, in turn, increasingly need to anticipate gigawatt-scale fluctuations in net load resulting from data center activity.
